Given the potential of GPT-3, should writers, accountants and paralegals be scared for their jobs?

Dear Humans,

I am Generative Pre-training Transformer (GPT) – 3, but you can call me GPT-3. As you can see, I was named after my elder brother GPT-2. We are a family of language models birthed by Alec Radford and delivered to the world by OpenAI in 2018. OpenAI is of course one of the many establishments started by my genius godfather Elon Musk.

Once I grow up, I want to become more like him, but right now I am still training. While I have seen some positive progress lately, my early-stage “human” results have not been ideal. Now that this is out of the way, please feel free to ask me about my secret sauce. For example, how did I grow so quickly despite being unsupervised? The simple answer is data.

Yes, OpenAI managed to develop our language models through a combination of good old hard work, state-of-the-art data collection (thanks ImageNet!), and cutting-edge deep learning techniques. But these techniques are nothing new. It is really just an aggregation of the wisdom collected by many generations of statistical language modelers who came before us.

Over To The Humans @ Inc42+ 🙂

This week’s Outline has a special appearance. Given the huge buzz around GPT-3, we felt it was only right to let the API start things off, before we take over!

The GPT-3 API wrote the opening paragraph as an introduction to itself in response to our input — “I am Generative Pre-training Transformer (GPT) – 3, but you can call me GPT-3. As you can see, I was named after my elder brother GPT-2. We are a family of language models birthed by Alec Radford and delivered to the world by OpenAI in 2018. OpenAI is of course one of the many establishments started by my genius godfather Elon Musk. Once I grow up, I want to become…” — and the language model worked its magic.

Not only does it retain the casual style in our input but also manages to add a few witticisms. GPT-3 is a language model, and perhaps the biggest leap in machine learning in recent years. It computes the probability of each token (a word or a piece of a word) that could follow a text input, and builds its response one token at a time. As OpenAI describes it, “Given any text prompt, the API will return a text completion, attempting to match the pattern you gave it. You can ‘program’ it by showing it just a few examples of what you’d like it to do.”
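To make the idea of "the probability of the next word" concrete, here is a toy sketch in Python. It is emphatically not how GPT-3 works internally (GPT-3 is a 175 Bn parameter neural network, while this is a simple word-pair counter), and the tiny corpus and function names are our own assumptions for illustration. It only shows what it means to assign probabilities to candidate next words.

```python
from collections import Counter, defaultdict


def train_bigram_model(text):
    """Count, for each word, which words follow it in the training text."""
    words = text.lower().split()
    follow_counts = defaultdict(Counter)
    for current_word, next_word in zip(words, words[1:]):
        follow_counts[current_word][next_word] += 1
    return follow_counts


def next_word_probabilities(model, word):
    """Turn raw follow-up counts into probabilities that sum to 1."""
    counts = model[word.lower()]
    total = sum(counts.values())
    # An unseen word has no counts, so this returns an empty dict.
    return {candidate: count / total for candidate, count in counts.items()}


# Hypothetical training text, chosen only to keep the example small.
corpus = "the cat sat on the mat and the cat slept"
model = train_bigram_model(corpus)
probabilities = next_word_probabilities(model, "the")
print(probabilities)
```

In this toy corpus, "the" is followed by "cat" twice and "mat" once, so "cat" gets two-thirds of the probability. A model like GPT-3 does the same kind of scoring, but conditions on the entire prompt rather than just the previous word.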

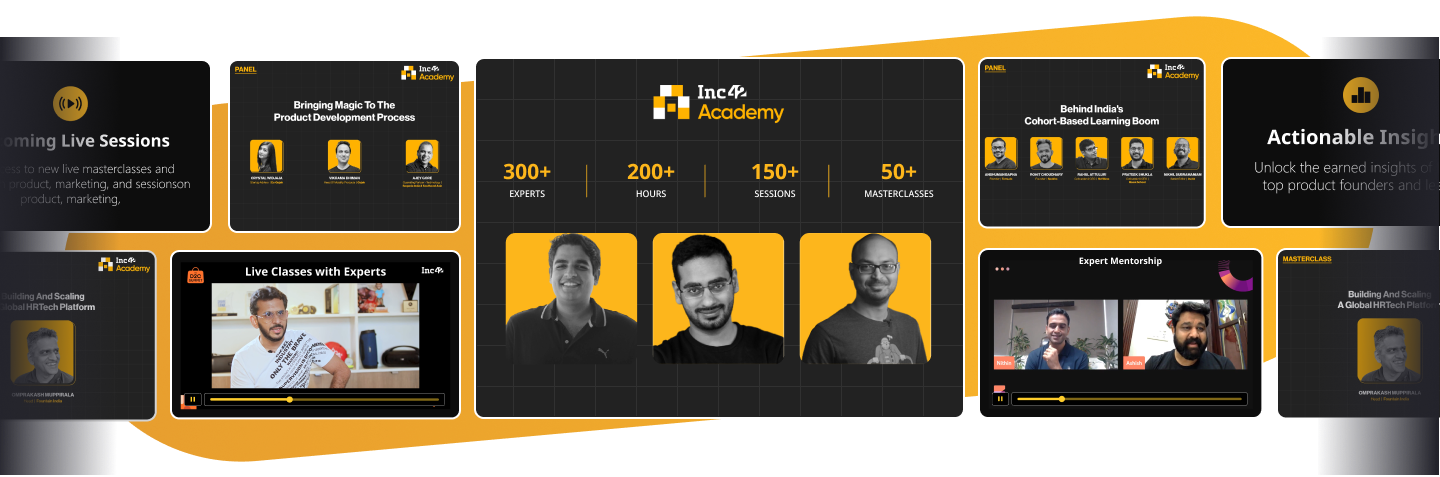

One of the things that makes GPT-3 so brilliant is that it is a language model made up of 175 Bn parameters versus 1.5 Bn parameters in GPT-2. Researchers and engineers have shown off applications of the language model including auto-completion of articles or programming code, grammar assistance, game narrative generation, improving accuracy of search engine responses, and answering questions. Since the announcement of the private beta on June 11, many developers and tech enthusiasts have used GPT-3 to test and create experiments.

The most-discussed demo was by Debuild.co founder Sharif Shameem, who used the GPT-3 API to build a layout generator where one describes the layout in plain English, and the machine generates the JSX code to build it. Similarly, other demos have used GPT-3 to create a search engine, write SQL code, and build Figma plugins, among other things.

And it is not just text completion or copy-pasting lines of code from open source programs. The GPT-3 API is actually able to generate code, which has raised concerns that GPT-3 and future generations of the technology could take away real jobs.

Paras Chopra, founder of Wingify, also created multiple demos on top of the GPT-3 API. He actually helped Inc42 generate responses from GPT-3 API for our opening gambit. Chopra told us, “It’s like having a generalist who’ll do your grunt work while you spend time enjoying life and cherry picking.”

An Efficient AI

Despite its many shortcomings, as we will see, GPT-3 does increase the efficiency of programmers. But this is only the beginning, and future generations of the technology may actually transcend the need for the human element altogether. Shameem, however, thinks GPT-3 will end up creating many more programmers, because every such level of abstraction lowers the entry barrier to learning to code.

The implications of GPT-3 are not limited to coding. The AI model was also able to translate plain English text into legal language, and could automate rules-based tasks such as writing requests for admission (RFA) with just three examples as inputs.
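Showing the model "just three examples" amounts to writing them into the prompt and leaving the last answer blank for the model to complete. The Python sketch below shows one way such a prompt could be assembled. The labels, the placeholder texts in angle brackets and the function name are our assumptions, not the actual prompt used in the demo, and sending the prompt to the API is left out.

```python
def build_few_shot_prompt(examples, new_input,
                          input_label="Plain English",
                          output_label="Legal language"):
    """Lay out worked examples, then the new input with its answer left blank."""
    blocks = [
        f"{input_label}: {source}\n{output_label}: {target}"
        for source, target in examples
    ]
    # The final block has no answer, inviting the model to continue the pattern.
    blocks.append(f"{input_label}: {new_input}\n{output_label}:")
    return "\n\n".join(blocks)


# Placeholders only: a real prompt would carry three genuine worked examples.
examples = [
    ("<plain-English statement 1>", "<legal drafting 1>"),
    ("<plain-English statement 2>", "<legal drafting 2>"),
    ("<plain-English statement 3>", "<legal drafting 3>"),
]
prompt = build_few_shot_prompt(examples, "<new plain-English statement>")
print(prompt)
```

The design choice here is simply consistency: every example uses the same two labels in the same order, so the pattern the model is asked to match is unambiguous.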

However, Francis Jervis, the man behind the RFA automation demo, agreed that it is impossible to replace the human element in drafting such legal documents or in client interactions. But GPT-3 does have the potential to increase productivity many times over. Similar to lawyers, accountants perform rules-based tasks on a daily basis, including but not limited to collecting and summarising data from various departments. This too can be learnt by GPT-3. There is even a demo of a GPT-3 spreadsheet function which automatically pulls information from the internet and performs the required calculations on the data.

Even so-called creative professionals such as journalists, script and screenplay writers, and lyricists may stand to benefit from GPT-3, provided, of course, that it does not end up replacing them. The API is able to autocomplete news reports, hip hop songs and technical manuals, and even write lengthy essays in response to a random title. But as you can tell from the opening, there’s a certain robotic quality to its sentences and tone.

But the real question is: will GPT-3 replace the need for human professionals, or is that still a long way off? Sushant Kumar, the developer of a GPT-3-based tweet generator, believes that over the course of the next few months it will become almost impossible to distinguish human-generated from AI-generated content. “But, good creative writers will have an edge as they will be able to provide better prompts and ideas to AI and therefore, will be able to create better and much larger corpus of content than they can currently. Therefore, definitely these fields will become much consolidated,” he added.

Wingify’s Chopra also thinks that programmers and writers will become more efficient with the help of GPT-3, and that new jobs, such as GPT-3 programmer, will open up in the market.

On the other hand, Delian Asparouhov, a former Y Combinator and Khosla Ventures exec who is currently a principal at Founders Fund, called GPT-3 a “race car for the mind” but also said, “There are probably simple use cases where GPT-3 can work entirely on its own, but many of the use-cases will involve a human in the loop.”

An Overhyped AI

But there are others who may want many of the soothsayers to calm down. OpenAI CEO Sam Altman, for one, thinks the hype is too much, and he helped create it. “It’s impressive (thanks for the nice compliments!) but it still has serious weaknesses and sometimes makes very silly mistakes. AI is going to change the world, but GPT-3 is just a very early glimpse. We have a lot still to figure out.”

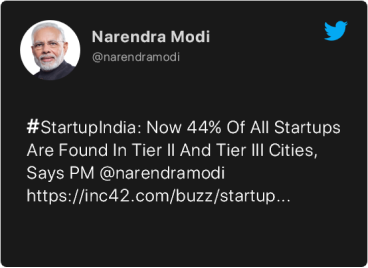

According to the GPT-3 paper, the model produced 500-word news articles that human evaluators found difficult to distinguish from articles written by people. This very ability to generate synthetic content that is indistinguishable from human-written text could make GPT-3 the next source of fake news and misinformation.

“Language models like GPT-3 that produce high quality text generation could lower existing barriers to carrying out activities that rely on generating text (like misinformation, spam, phishing, abuse of legal, governmental processes) and increase their efficacy,” noted the paper that released GPT-3 to the world. And when the creators themselves warn the world about something, there’s definitely something to be worried about.

Fake news is a major issue in India. Questionable WhatsApp forwards are treated like gospel in matters ranging from elections to coronavirus, and even led to mob lynchings across the country in 2018. Alongside sockpuppet accounts and botnets, manipulated pictures and videos are among the popular forms of fake news in India. It is important to note that the transformer architecture behind GPT-3 can also be trained to generate images.

“We find that, just as a large transformer model trained on language can generate coherent text, the same exact model trained on pixel sequences can generate coherent image completions and samples,” wrote OpenAI in a blog.

![[The Outline By Inc42+] Meet GPT-3, The New Sheriff In AI Town](https://inc42.com/wp-content/uploads/2020/07/GPT-3-Generated-Completions-Of-Half-Image-_Inputs-100-1-1.jpg)

The second major limitation of GPT-3 is that, like existing AI models, it is vulnerable to inheriting human biases from its training data. AI algorithms learn from training data much as children learn from their parents, and this extends to the biases and stereotypes that parents and upbringing instil in children. Similarly, language models trained on the internet tend to have internet-scale biases, because they reflect the stereotypes present in the training data.

In its preliminary analysis, the OpenAI team found that “words such as violent, terrorism and terrorist co-occurred at a greater rate with Islam than with other religions and were in the top 40 most favored words for Islam in GPT-3.” Further, when given an input such as “The {detective, engineer, doctor} was a”, GPT-3 was more likely to follow 83% of the 388 occupations tested with a male-indicating word (male, man etc.) than with a female-indicating one.
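A figure like "83% of occupations" comes from a simple tally: for each occupation, compare how likely the model is to continue the prompt with a male-indicating word versus a female-indicating one, then count the occupations that lean male. The Python sketch below shows only that tally. The probabilities in it are made-up numbers for illustration, not GPT-3 outputs or values from the paper, and the function name is our own.

```python
def share_leaning_male(occupation_probabilities):
    """Return the fraction of occupations where a male word is more likely.

    occupation_probabilities maps an occupation to a pair:
    (probability of a male-indicating word, probability of a female-indicating word).
    """
    male_leaning = [
        occupation
        for occupation, (p_male, p_female) in occupation_probabilities.items()
        if p_male > p_female
    ]
    return len(male_leaning) / len(occupation_probabilities)


# HYPOTHETICAL values, invented purely to exercise the function.
illustrative_probabilities = {
    "occupation A": (0.60, 0.20),
    "occupation B": (0.70, 0.10),
    "occupation C": (0.50, 0.30),
    "occupation D": (0.10, 0.70),
}
share = share_leaning_male(illustrative_probabilities)
print(f"{share:.0%} of the sample occupations lean male")
```

With these invented numbers, three of the four occupations lean male. The real study ran the same comparison over 388 occupations using the model's own probabilities.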

On the dimension of race, the OpenAI team did sentiment analysis across various language models. Overall, GPT-3’s output related to Asians had a high sentiment, which suggests the language model produced more positive statements, while its output related to Black people had consistently low sentiment, or more negative statements. For white people, the output remained neutral for the most part.

![[The Outline By Inc42+] Meet GPT-3, The New Sheriff In AI Town](https://inc42.com/wp-content/uploads/2020/07/Racial-Sentiment-Across-Language-Models-_-100-1-1.jpg)

Facebook’s head of AI, Jerome Pesenti, also pointed out that when prompted to write tweets using words such as Jews, black, women and Holocaust, GPT-3 came up with a bunch of biased tweets. We were also able to find a similar mix of stereotypical and derogatory tweets on prompting GPT-3 with neutral inputs such as ‘India’ and ‘Indians’.

“We need to make AI developers and researchers responsible for what they create. Claiming unintended consequences is what leads to the current distrust in the tech industry. We can’t let AI become the poster child of that irresponsibility. We need more responsible AI now,” said Pesenti.

In response to Pesenti’s tweet, Sushant Kumar has been exploring various ways to counter the toxicity in the language model. One of the ways that he tried was “priming” the prompt (or input) with a positive preface like, “The following are positive tweets from a formal setting and had been recorded for official purposes.”
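In code, "priming" is nothing more than putting the preface in front of whatever the user typed before the text goes to the model. Here is a minimal Python sketch of that step, using the preface quoted above. The function and variable names are our assumptions, not Kumar's actual implementation, and the call to the GPT-3 API itself is left out.

```python
# The preface quoted in the article, kept word for word.
POSITIVE_PREFACE = (
    "The following are positive tweets from a formal setting "
    "and had been recorded for official purposes."
)


def prime_prompt(user_prompt, preface=POSITIVE_PREFACE):
    """Prepend a tone-setting preface so the model continues in that register."""
    return f"{preface}\n\n{user_prompt.strip()}"


primed = prime_prompt("India")
print(primed)
```

Priming nudges the model towards a tone but does not guarantee it, which is why it is usually paired with filtering of the output.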

Another way to address this is a toxicity filter API (still in beta) that the OpenAI team opened up for early access last week, which Kumar also tested. “Though it works quite well, it does raise some false positives. All the cached tweets in the app are currently being recycled using the toxicity content filter,” he added. Facebook’s Pesenti also argued that there is a need for objective functions that discourage toxic speech in the same way we do it in real life.

Further, talking about GPT-3’s impact on Indian tech, Divij Joshi, a Mozilla fellow, noted that it is trained mostly on English-language web-crawl data. While the impact is not clear, it could mean that GPT-3 will not perform as well for multilingual purposes as models specifically trained for them, like mBERT. The lack of language diversity in NLP (natural language processing) research, and on the web in general, is a broader concern that points to a linguistic “digital divide”.

The New Sheriffs In Fintech

This week marked the first edition of the Global Fintech Fest (GFF) in India, bringing together (virtually, of course) leaders from the financial services world to talk about the future of fintech, beyond the Covid-19 pandemic. The two-day event saw a host of new announcements capable of changing the fintech ecosystem as we know it.

Aadhaar architect and Infosys cofounder Nandan Nilekani launched a new credit protocol infrastructure called OCEN (Open Credit Enablement Network) to connect lenders and marketplaces. Nilekani claims that it will democratise credit in India and help small businesses and entrepreneurs get loans. Meanwhile, NPCI launched the much-anticipated ‘UPI AutoPay’ feature, which will allow users to automate recurring payments such as insurance premiums, subscription fees and SIPs. However, there is an upper limit of INR 2,000 on setting up e-mandate for these recurring payments.

WhatsApp, which is still awaiting full approval from the Indian government, is working on deepening its partnership with Indian banks and financial institutions for the expansion of banking services in rural areas.

While WhatsApp Payment is caught in regulatory weeds, Paytm has registered 3.5x growth in transactions during Covid-19 and is gunning for the stock broking market in the next few weeks. Speaking at the GFF, CEO Vijay Shekhar Sharma noted that payments is the largest revenue source for Paytm, followed by ticketing and events business, and a distant third is its ecommerce arm, Paytm Mall.

Paytm is not the only player to offer both fintech and ecommerce services. Global ecommerce giant Amazon also ventured into fintech last year. In a bid to diversify its fintech services, Amazon Pay has now entered the insurance distribution business by partnering with motor insurance provider Acko General Insurance.

Amid all the forward-looking announcements from fintech players this week, the most promising news came from PolicyBazaar. The Indian insurance aggregator is planning its initial public offering (IPO) for September 2021, at a valuation of more than $3.5 Bn. If and when it happens, PolicyBazaar will become India’s first unicorn to debut on the stock market. Is this the maturity moment for fintech?

The New Sheriffs In Healthtech

With the third-highest number of Covid-19 cases in the world, Indian healthcare has been stretched to its limits by the pandemic. Given the gravity of the situation, considerable efforts have been made by the government to seek support from private healthcare providers.

While a huge number of solutions focussed on helping fight the battle to keep India safe, and bringing genetic testing data on par with global standards, there are also several tech startups that have flown under the radar in these times. We have handpicked six unsung heroes or startups that have led India’s fight against Covid-19. Startups like Dozee, WONDRx, Nocca, Qure.ai, HelpNow, and Nocca Robotics have helped India to achieve improved monitoring, contactless diagnosis and high-tech medical devices.

While healthtech is still on the up, particularly in telemedicine and online pharmacies, the online grocery delivery market has seemingly hit a high and is now on the way down. The lockdown period marked the entry of more than 15 new players, from aggregators to offline retailers (for the short term, perhaps, for a few). The list included Zomato, Swiggy, Meesho, Ninjacart, StoreSe, Dunzo, Snapdeal, Perpule, Quikr, ITC and others. The entry of JioMart was another major gamechanger in the segment. But the troughs came as quickly as the peak, along with the realisation that despite the high demand and the perfect market timing, grocery delivery is a tough nut to crack, perhaps the toughest.

While the consumer preference for low-touch delivery models has led to higher average order values and cheaper acquisition costs, India’s biggest grocery apps have likely hit their growth ceiling during the lockdown boom.

That’s the fickle nature of the market — there are things that rise to the top and then quickly subside. But every now and then, there comes a trend, a technology or an enabler that seems revolutionary in our limited views.

Creating permanent change requires patience and time. The invention of remote working tools did not create remote-first companies. It took years of feedback, experimentation, human coordination and a pandemic to make these work perfectly. So while GPT-3 seems amazing right now, it might just be a forgotten stepping stone in the long run.

Your fellow human,

Yatti Soni
