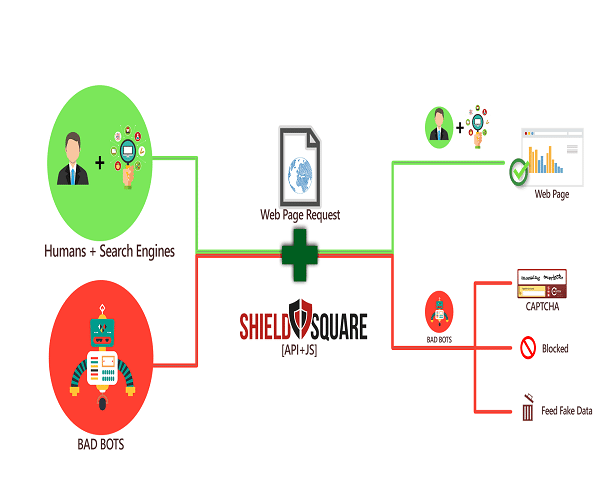

The dawn of the Internet era saw the emergence of computer viruses, and anti-virus programs were developed to counter them. Now there is an added menace: bad bots, automated malicious programs written by sophisticated programmers. They target specific websites to scrape data, steal content, spam forms and skew website analytics data. To tackle this nightmare for website owners worldwide, Pavan Thatha and his brother Rakesh decided to build ShieldSquare, a real-time bot prevention solution.

Since its launch in 2013, Bangalore-based ShieldSquare has blocked over 9 billion bad bots, protecting more than 4 billion web pages to date. Apart from the Thatha brothers, the founding team included Vasanth Kumar Gopalakrishnan, Srikanth Konijeti and Jyoti Kakatkar. The company was incubated at Microsoft Ventures Accelerator, Bangalore.

With a 50-member team, ShieldSquare has onboarded clients from over 68 countries. It currently works with leading companies such as Seloger.com (part of the Axel Springer Group) in France, Navent in Latin America and Magicbricks in India.

Why Are Web Scraping Bots A Headache?

Of all kinds of bots, such as theft bots, fraud bots and site abuse bots, web scraping bots are the most troublesome. Scraping is the most common bot attack against ecommerce marketplaces and media websites that produce rich, unique and proprietary content. Ecommerce businesses have their pricing information stolen, which leaves them exposed to competitors; media sites have their content lifted, which erodes the brand's competitiveness and results in revenue loss.

According to the 2015 Bad Bot Landscape Report, China leads the world in bad bot mobile traffic. Globally, a whopping 41% of bad bots mimicked human behavior, and only 7% disguised themselves as good bots (e.g., Googlebot and Bingbot). Another report found that on any given day, over 90 percent of all security events on the network it monitored were the result of bad bot activity.

In simple terms, for every human visit to a site, there is one bot visit, and the majority of this bot traffic is malicious.

ShieldSquare’s Approach To Protecting Website Content

ShieldSquare collects data, analyses it and detects bots in real time based on their behavioural patterns on the website. Various parameters about the bot’s execution state and environment are collected, and these are combined into a bot fingerprint UUID (Universally Unique Identifier).

This UUID, rather than an IP address, is used to identify the bot. This is the company's unique proposition for effectively distinguishing humans from good bots and bad bots while ensuring zero false positives.

For example, suppose a programmer launches a bot to scrape abc.com while sitting at a cafe, and at the same place a genuine user logs in to abc.com to browse. With the traditional IP-based approach, either both of them get blocked or both get through, because they share the same IP address.

However, since ShieldSquare uses the UUID instead, it blocks the malicious bot and lets the genuine user through. If the programmer returns with a new bot framework, the new bot’s pattern is recognised and a new UUID is generated, blocking it again.
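The cafe example can be illustrated with a minimal sketch, assuming a fingerprint is just a deterministic UUID derived from a handful of environment signals. The signal names (`user_agent`, `js_enabled`, `screen`), the namespace and the helper functions are hypothetical and are not ShieldSquare's actual inputs or API. The point is only that a verdict keyed on the fingerprint follows the client, while a verdict keyed on the IP address hits everyone behind it.

```python
import uuid

# Hypothetical namespace for fingerprint UUIDs (illustrative only).
FINGERPRINT_NS = uuid.uuid5(uuid.NAMESPACE_DNS, "fingerprint.example")


def fingerprint_uuid(env: dict) -> uuid.UUID:
    """Derive a deterministic UUID from a client's execution environment.

    The signal names are assumptions; a real product would collect many more.
    Keys are sorted so the same environment always yields the same UUID.
    """
    canonical = "|".join(f"{key}={env[key]}" for key in sorted(env))
    return uuid.uuid5(FINGERPRINT_NS, canonical)


def ip_blocked(ip: str, blocked_ips: set) -> bool:
    # Traditional approach: everyone behind the same IP gets the same verdict.
    return ip in blocked_ips


def fingerprint_blocked(env: dict, blocked_ids: set) -> bool:
    # Fingerprint approach: the verdict follows the client, not the address.
    return fingerprint_uuid(env) in blocked_ids


CAFE_IP = "203.0.113.7"  # documentation-range address shared by both visitors
bot_env = {"user_agent": "python-requests/2.31", "js_enabled": False, "screen": "none"}
human_env = {"user_agent": "Mozilla/5.0", "js_enabled": True, "screen": "1920x1080"}

# Blocking by IP catches the human along with the bot.
blocked_ips = {CAFE_IP}
print(ip_blocked(CAFE_IP, blocked_ips))  # True for both visitors

# Blocking by fingerprint separates them.
blocked_ids = {fingerprint_uuid(bot_env)}
print(fingerprint_blocked(bot_env, blocked_ids))    # True
print(fingerprint_blocked(human_env, blocked_ids))  # False
```

In this sketch, a bot that returns with a different framework presents a different environment and therefore a different UUID. That UUID would have to be flagged again by the detection step, which is not modelled here.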

Why Is ShieldSquare Unique?

Existing web security solutions, such as Web Application Firewalls (WAFs), protect websites against threats such as SQL injection, cross-site scripting (XSS) and DDoS attacks, and application vulnerabilities. However, they are ineffective at protecting content from automated bots, let alone against the bot threats described above. Moreover, WAF solutions lack the adaptability to stop emerging bot threats.

In effect, there are not many startups working in the bot prevention space. Globally, one major competitor for ShieldSquare is Distil Networks, which monitors and filters traffic at the network level using DNS-based routing.

ShieldSquare, on the other hand, helps online businesses detect and prevent bad bots in real time without the need for DNS redirection. Narendran Vaideeswaran, Director, Product Marketing, explained, “Unlike the DNS re-routing technique used by other bot detection products in the market, ShieldSquare uses an API-based approach to protect websites from bots, ensuring data privacy and seamless integration with the existing infrastructure.”

Technologies Used At ShieldSquare To Identify Bots

For bot detection, ShieldSquare uses techniques such as dynamic Turing tests (challenges that tell humans and computers apart), device fingerprinting, user behaviour analysis, IP tracking tests and more.

A video prepared by Wildbeez Interactive showcases how ShieldSquare works to prevent web scraping and other malicious activities.

To identify bots, from simple to complex, ShieldSquare needs a few page hits to understand the traffic, using one or more of the above rules, before it marks the traffic as bad. This also helps ensure that there are no false positives.
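The idea of waiting for a few page hits before issuing a verdict can be sketched as a per-fingerprint counter. This is an illustration under stated assumptions, not ShieldSquare's algorithm. The class name, the `min_hits` threshold of 3 and the `bad_ratio` of 0.6 are all hypothetical. Each hit is reduced to a list of booleans, one per detection rule, and a fingerprint is marked bad only once enough hits have been seen and most of them looked suspicious.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class HitRecord:
    hits: int = 0        # page hits seen for this fingerprint
    suspicious: int = 0  # hits on which at least one detection rule fired


class HitAccumulator:
    """Defers a verdict until a fingerprint has made enough page hits.

    min_hits and bad_ratio are illustrative values, not ShieldSquare settings.
    """

    def __init__(self, min_hits: int = 3, bad_ratio: float = 0.6):
        self.min_hits = min_hits
        self.bad_ratio = bad_ratio
        self.records = defaultdict(HitRecord)

    def observe(self, fingerprint: str, rule_results: list) -> str:
        """Record one page hit and return 'undecided', 'bad' or 'not_bad'."""
        record = self.records[fingerprint]
        record.hits += 1
        if any(rule_results):
            record.suspicious += 1
        # A single odd-looking hit is not enough evidence on its own.
        if record.hits < self.min_hits:
            return "undecided"
        ratio = record.suspicious / record.hits
        return "bad" if ratio >= self.bad_ratio else "not_bad"


acc = HitAccumulator()
# A scraper trips some rule on every page it fetches.
for rules in ([True], [True, False], [False, True]):
    verdict = acc.observe("bot-fp", rules)
print(verdict)  # bad

# A human who trips a rule once is not flagged.
for rules in ([True], [False], [False]):
    verdict = acc.observe("human-fp", rules)
print(verdict)  # not_bad
```

Deferring the verdict trades a few unblocked requests for fewer false positives. That matches the trade-off the text describes, though the real thresholds and rules are not public here.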

The Initial Hurdles Faced

According to Pavan, the product was initially not fully self-serve, and the team had to handhold customers through integration. He added, “Though that approach worked to get our initial customers in India, we couldn’t use that approach to scale outside our home base.”

Later, the team worked on making product integration simple and straightforward. Customers can now integrate ShieldSquare’s solution without even talking to the team.

This helped them move across geographic boundaries and gain traction in multiple countries.

While working with clients, the team also realised that each customer’s infrastructure is different, and that customers want more options for integrating the solution in a way that suits their business logic and requirements. “So we launched Web server plugins for customers who want to integrate our solution at web server level itself. We have also launched an on-premise solution for customers who want to deploy our solution within their private cloud,” explained Pavan.

The company also began offering different protection modes that determine how detected bot traffic is handled. Customers can choose the real-time protection mode when they want to act on bots instantly at the server or application level, or the feed-based protection mode if they want bot prevention at the CDN (Content Delivery Network) or WAF level.
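The difference between the two modes can be sketched in a few lines. This assumes verdicts are already available per client identifier. The identifiers, the 403 response and the JSON feed shape are hypothetical and do not describe ShieldSquare's actual integration. In real-time mode the application consults the verdict on every request. In feed-based mode a list of flagged identifiers is published for a CDN or WAF to enforce on its own.

```python
import json

# Illustrative verdicts keyed by a hypothetical client fingerprint ID.
verdicts = {"fp-a1": "bad", "fp-b2": "not_bad", "fp-c3": "bad"}


def realtime_response(client_id: str) -> int:
    """Real-time mode: the server or application acts on each request as it arrives."""
    return 403 if verdicts.get(client_id) == "bad" else 200


def build_feed() -> str:
    """Feed-based mode: publish flagged identifiers for a CDN or WAF to enforce.

    The JSON shape here is a hypothetical format, not ShieldSquare's actual feed.
    """
    flagged = sorted(cid for cid, verdict in verdicts.items() if verdict == "bad")
    return json.dumps({"block": flagged})


print(realtime_response("fp-a1"))  # 403
print(build_feed())  # {"block": ["fp-a1", "fp-c3"]}
```

Real-time mode can act on a verdict the moment it is made. Feed-based mode moves enforcement to the edge, at the cost of a delay until the next feed is consumed.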

Becoming Cash Flow Positive In Q1 2016

In Q1 this year, the annual revenue rate (ARR) tripled and average revenue per account (ARPA) per year doubled to $10,000. As Pavan highlighted, the initial strategies of focusing on leads from inbound channels, incentivising customers and offering free tools (ScrapeScanner and BadBot Analyser) played a critical role in building strong fundamentals for further growth.

“The more sustainable way to reach positive cash flow is to grow revenues. There are certain things that might seem unimportant, but rather carry great value. Our employees at Bangalore enjoy free breakfast, lunch and snacks, while the same will be implemented soon for our folks in Chennai, who are already beating the heat with the free tender coconuts we offer twice a day,” added Pavan.

The Road Ahead

ShieldSquare has already raised $300K in angel funding. Its investors include Freshdesk founder Girish Mathrubootham and the three co-founders of RedBus. StartupXseed Ventures LLP has also invested an undisclosed amount in the startup through its venture fund, Aaruha Technology.

The company is now aiming for a stronger presence in the US. “We are already making our presence as a sponsor in key events (like Shoptalk), and planning to participate in more such events,” said Pavan.

The team also intends to cater to more sites by launching WordPress and Drupal plugins later this year.

“To counter the emerging bot threat landscape, we have developed cutting-edge technologies that combine intelligent algorithms and enhance them with our data science team’s expertise. This helps us stay one step ahead of the bots, and that’s one of the reasons our customers appreciate our product,” added Pavan.

Editor’s Note

With more than half of internet traffic coming from non-humans, there is a lot of cleanup to be done. The number of Internet users is increasing exponentially, as is the number of web portals, ranging from ecommerce and online content to airline ticketing.

Good bots are the worker bees of the internet that assist its evolution and growth. Bad bots, on the other hand, are malicious intruders that swarm the internet and leave a trail of hacked websites and downed services. Although technology is playing its role in combating the menace of these bots, some ground rules still need to be established.
