SUMMARY

The company claims to have a safety budget greater than Twitter’s annual revenue

Facebook is using a combination of AI and human content reviewers to detect hate speech and other platform violations

Its systems can detect hate speech in 40 languages

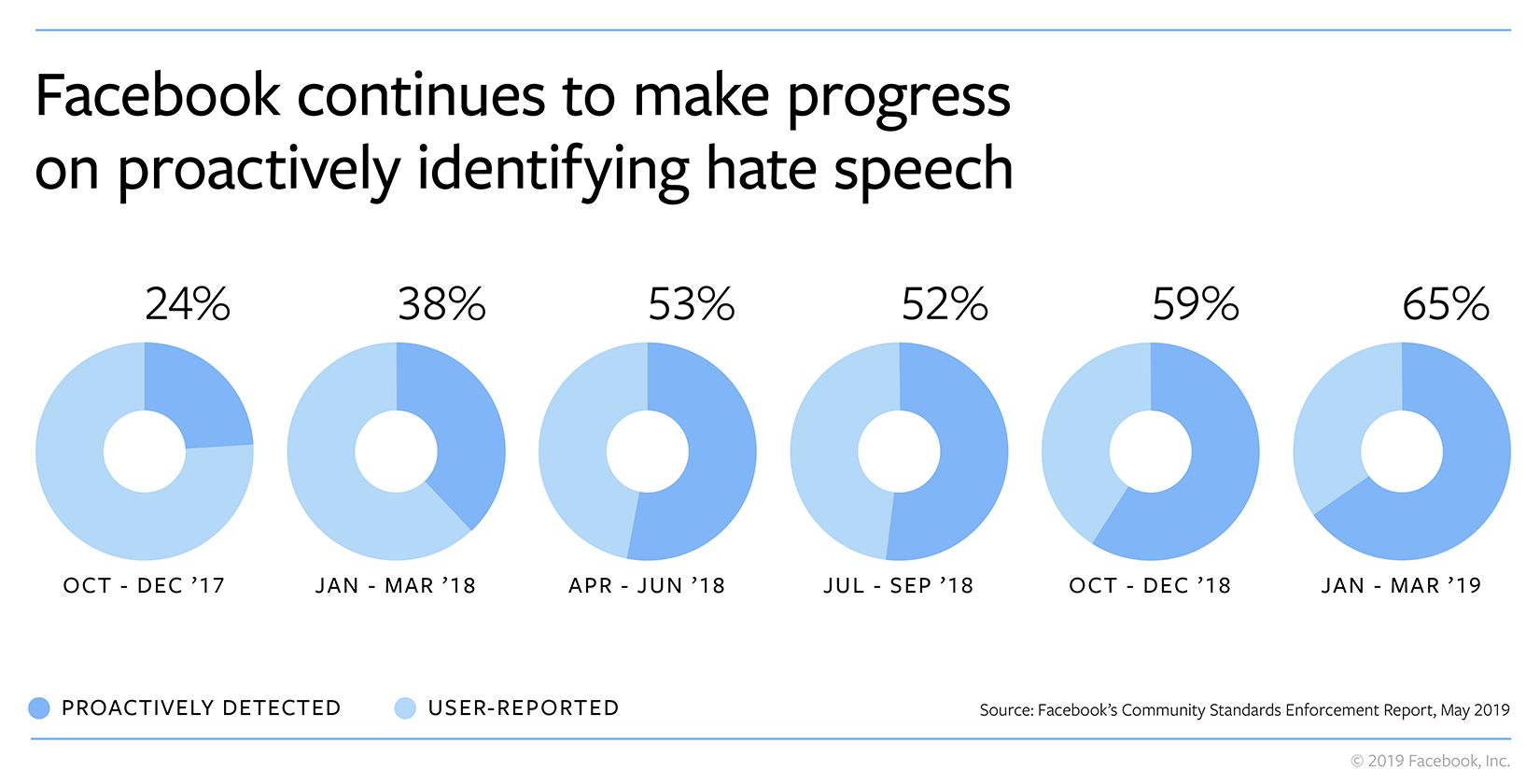

Social media platform Facebook has reported that 65% of the hate speech it removed was identified before anyone reported it, a leap from about 24% in Q4 2017.

“In the first quarter of 2019, we took down 4 Mn hate speech posts and we continue to invest in technology to expand our ability to detect this content across different languages and regions,” the company said in a media statement.

According to Facebook CEO Mark Zuckerberg, the company’s systems can now detect hate speech in 40 languages. Facebook is investing heavily in strengthening its safety systems, and Zuckerberg claims that its safety budget this year is larger than Twitter’s entire annual revenue.

Facebook uses artificial intelligence alongside a team of 15K human content reviewers. These reviewers are spread across the globe and help the company review content and enforce its Community Standards.

Justin Osofsky, head of Facebook’s global operations team, said, “AI has really changed the game when it comes to detecting violating content. But what it still can’t do well is to understand context, and context is key when evaluating things like hate speech.”

“A slur for instance is often an attack on someone based on race, national origin or sexual orientation — but it can sometimes be a joke, one used self-referentially or could be employed to raise awareness about the bigotry someone has experienced,” he added.

Osofsky said the company is investing heavily in understanding the linguistic and cultural nuances of hate speech. It has also trained Facebook’s AI to learn from the past removal decisions of its human content reviewers.

Guy Rosen, head of Facebook’s product and engineering team, also stressed the need to combine AI and human review in detecting hate speech. “AI is also not a silver bullet. We need a combination of technology and people, whether it’s the people who report content to us or the reviewers on our teams who review content,” he said.

Commenting on the need for government regulation of hate speech, Zuckerberg said, “At the end of the day, our teams are always going to have to make some judgment calls about what we leave up and what we take down.”

“If the rules for the internet were being written from scratch today, I don’t think most people would want private companies to be making so many of these decisions about speech by themselves. Ideally, we believe that this should be part of a democratic process which is why I’ve been calling for new rules and regulations for the Internet,” he added.

2.19 Bn Fake Accounts Removed In Q1 2019

The company also claims to have taken down 2.19 Bn fake accounts in the first quarter of 2019, a sharp rise from 1.2 Bn in Q4 2018.

Facebook had earlier reported 2.38 Bn monthly active users on its platform in Q1 2019, only slightly more than the number of fake accounts it removed in the same quarter. Addressing this figure, Zuckerberg said, “Most of these fake accounts were blocked within minutes of their creation, before they could do any harm. So they were never considered active in our systems and we don’t count them in any of our overall community metrics.”

“This huge metric of fake accounts is due to an increase in automated attacks by bad actors who try to create large amounts of fake accounts at once,” he added.

To detect fake accounts, the company’s systems continuously evaluate an account’s activity, both at the time of creation and once the account is active. The goal is to determine whether it is a fake account that exists only to post spam.

Zuckerberg said, “The important thing as we think through this is also to balance that with understanding how legitimate users are behaving on our systems.”

To illustrate, he said that real people who sign up may sometimes behave oddly, perhaps because they are new to the internet or to Facebook. Such users may send out a large number of friend requests, which can make them look like spammers.

In April, Facebook was reported to be blocking or removing approximately one million accounts per day. In the run-up to India’s 2019 General Elections, the social media platform removed nearly 700 pages, groups and accounts in India for violating Facebook’s policies on coordinated inauthentic behaviour and spam.

In the same month, the company also removed 103 pages, groups and accounts on both Facebook and Instagram for engaging in coordinated inauthentic behaviour as part of a network that originated in Pakistan.

Commenting on the importance of releasing violation prevalence figures, Zuckerberg said, “Understanding the prevalence of harmful content will help companies and governments design better systems for dealing with it and I believe that every major internet service should do this.” The company also plans to release similar figures for Instagram in its next report.