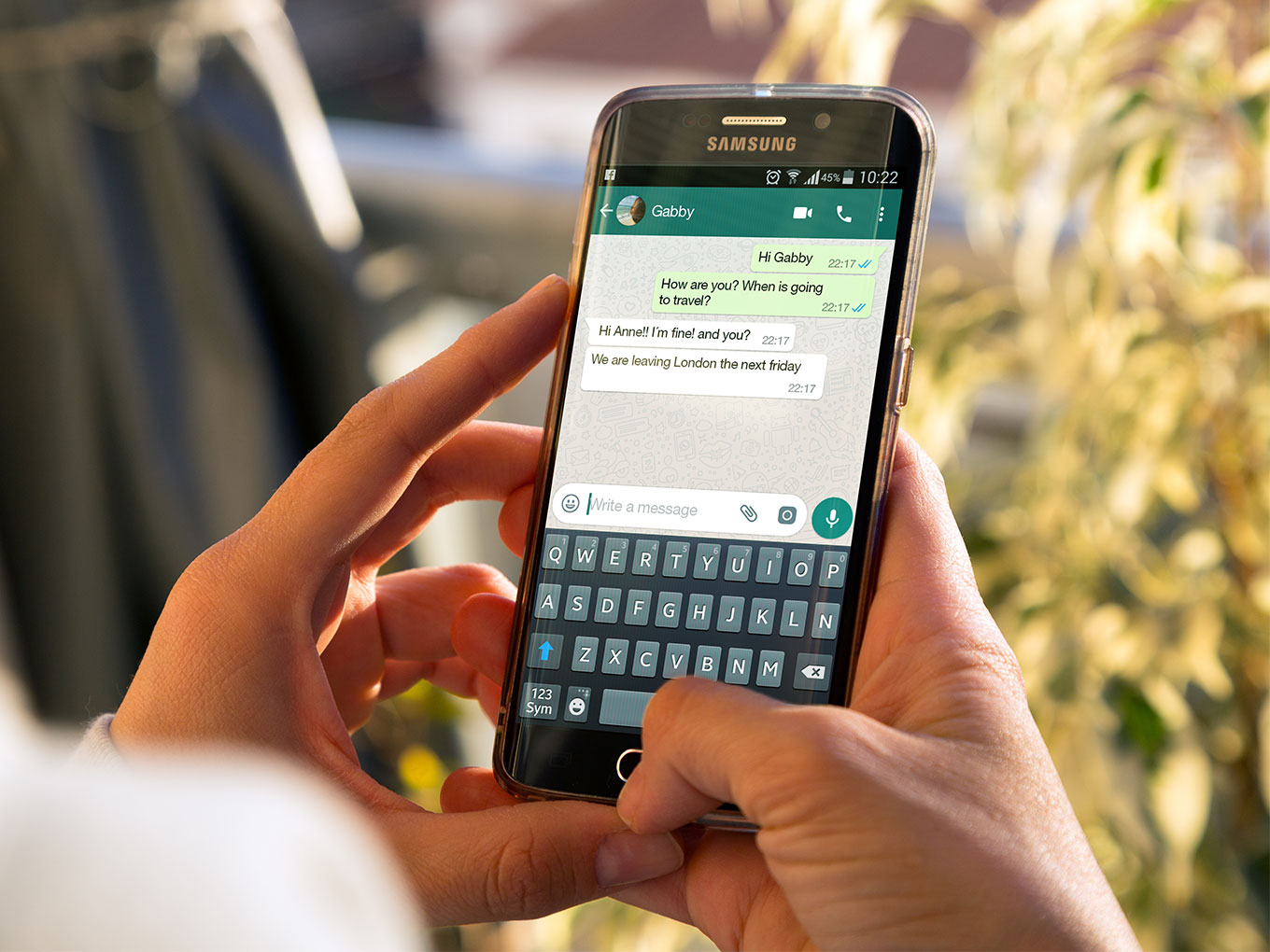

Facebook's response to the Indian government's demand that WhatsApp stop the spread of "irresponsible and explosive messages" was to offer minor enhancements, public education campaigns, and "a new project to work with leading academic experts".

As it did when the United Nations accused it of having “a determining role” in the genocide against Rohingya refugees, Facebook pleaded ignorance, offered sympathy, and claimed that it was unable to do anything about it.

Just as governments hold manufacturers responsible for the damage their defective products cause, Facebook needs to be held liable for the two dozen deaths that WhatsApp may have facilitated through its group-chat feature. And it needs to recall the product, rethink it, and redesign it.

If it won't do that, the Lok Sabha needs to give more teeth to the Consumer Protection Bill 2018, which it is about to consider: teeth that increase the penalties and that address defects in product design. The bill also needs to be broadened to cover online platforms.

WhatsApp has just added a small feature: a "Forwarded" label on forwarded messages. But the label gives no information about the source of the original message. Set aside the difficulties this poses for villagers; even highly educated users could be misled into believing that a source is credible when it is not.

The "Forwarded" label is simply an excuse for further inaction by WhatsApp and does nothing to address the fact that shared messages cannot be traced back to their source.

The deeper problem here is one that Band-Aids such as these won't fix: the fundamentally and intentionally manipulative nature of these technologies.

As detailed in my new book, Your Happiness Was Hacked: Why Tech Is Winning the Battle to Control Your Brain—and How to Fight Back, the technology industry is combining propaganda techniques initially developed by the U.K. government in World War I with addiction strategies perfected by the gambling industry to keep us checking its news feeds, messages, updates, and alerts.

These techniques hark back to the work of psychologist B.F. Skinner, who, in the 1930s, put rats in boxes and taught them to push levers to receive food pellets. The rats pushed the levers only when hungry, so to get them to press the levers consistently, he gave them a pellet only some of the time, a technique now known as intermittent variable rewards.

Casinos have used that same technique for decades to keep us pouring money into slot machines. And now the technology industry is using it to keep us checking our smartphones for e-mails, for new followers on Twitter, and for more “likes” on photographs we posted on Facebook. It has a Stanford University professor, B.J. Fogg, to thank for this.

What Mike Krieger Learned From B.J. Fogg

One result of Fogg's influence can be seen in the work of Mike Krieger, who, as a young Stanford student in 2006, enrolled in Professor Fogg's class on persuasive technology. When Fogg had his students build applications as a class project, Krieger built one that shared photos. He later went on to found, with Kevin Systrom, the photo-sharing social network Instagram.

Facebook acquired Instagram for $1 billion in 2012. At that time, Instagram hadn't earned a dime in revenue. What Instagram had, as Krieger had learned from his time with Fogg, was the ability to entice and addict its users, some of whom were spending hours a day scrolling through images posted by others and hours more planning the images they wanted to capture and post.

Krieger is Fogg's most prominent alumnus, but many others in Silicon Valley learned from him how to inculcate habits in their users, including those who took his now-famous 2007 course on Facebook apps.

In recent interviews, Fogg has expressed misgivings that his findings are being used for profiteering and for hoarding human attention in ways that do not benefit people or society. But, as with the work of many other researchers (Einstein prominent among them), his work was readily put to uses that went far beyond his original ideas.

This is the problem with all technologies: they can be used for good and for evil. They can be used in ways that product developers never imagined and can create the kind of carnage we are seeing from WhatsApp. Rather than bringing societies together and uplifting humanity, they can instead divide and conquer.

The lesson here for entrepreneurs and product developers is to be cognizant of the uses and possible misuses of your technology. When things go wrong, as they have with WhatsApp, don't hunker down and defend something that is obviously defective. Instead, reflect and rethink, be prepared to admit a serious error, and go back to the drawing board to create something better.

This is what Facebook and WhatsApp need to do now; their insularity and obsession with profit are tearing apart societies rather than making the world a better place.