SUMMARY

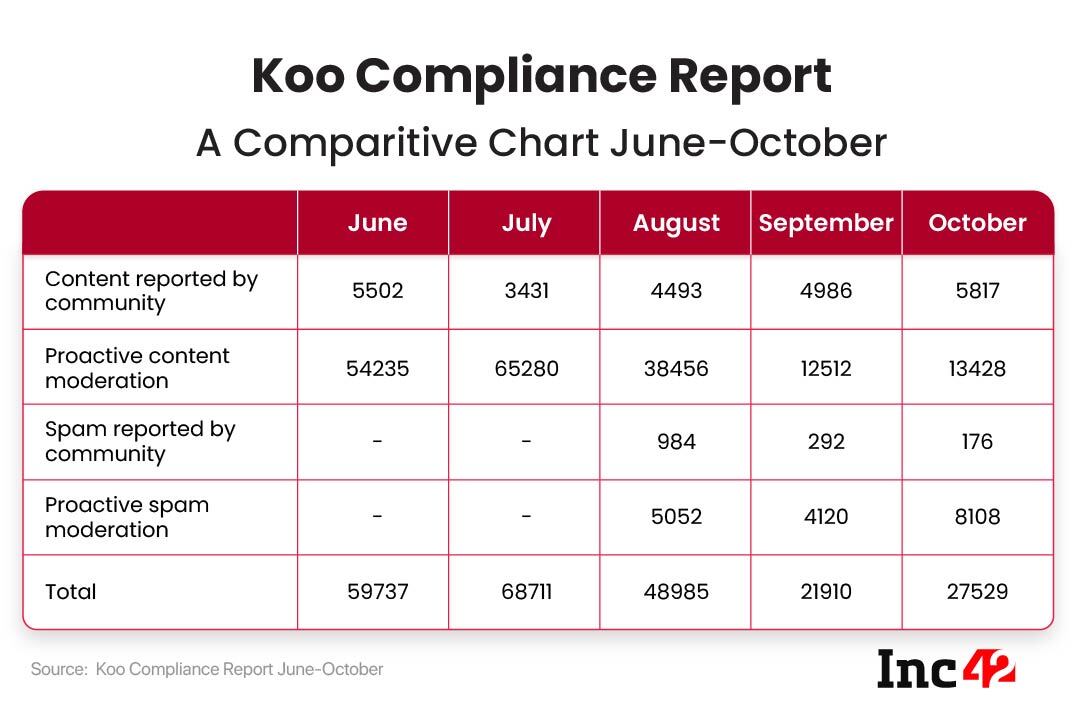

In its fifth compliance report, Koo stated that it hid and/or blacklisted 155 spam accounts based on user reports and 8,042 accounts through self-moderation between October 1 and 31

The total number of content pieces is 21% more than the 22K posts reported in September

Since compliance reports became mandatory for social media intermediaries with more than 5 Mn users, Koo has moderated over 226K accounts and posts

India’s Twitter alternative Koo has published its fifth compliance report since the New IT Rules came into effect in May 2021. In October, Koo removed 1,977 posts: 1,203 based on user reports, 687 through proactive moderation, 21 spam posts based on user reports and 66 spam posts/accounts through proactive moderation.

Further, it took other actions such as overlay, blurring, ignoring, warning and more against 4,614 posts based on user reports, and against 12,741 posts through self-moderation.

Until July, the company revealed only content moderation statistics, but since August it has also specified the number of spam posts and accounts. The platform hid and/or blacklisted 155 spam accounts based on user reports and 8,042 accounts through self-moderation between October 1 and 31.

The numbers are up significantly from September, when it moderated 22K posts, but significantly lower than those for June, July and August.

The compliance report has to be filed under the Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Rules, 2021, which came into effect on May 25, 2021.

Founded in March 2020 by Aprameya Radhakrishna and Mayank Bidawatka, Koo is a microblogging platform available in eight Indian languages, including Hindi, Kannada and Marathi.

Backed by Tiger Global, Blume Ventures, Mirae Asset Management, 3One4 Capital and Accel, the social media platform is currently valued at around $100 Mn.

At present, Koo boasts over 15 Mn users, with 5 Mn users added in Q2 FY22. The startup has also recently ventured into the Nigerian market and plans to expand across Southeast Asia by H2 CY22.

Its parent company, Bombinate Technologies, also operates the Indian language Q&A platform Vokal.

Social Media Giants Remove 106.9 Mn Accounts/Posts In Q2 FY22

Top social media platforms, including Google, Facebook, Instagram, WhatsApp, Twitter and Koo, have been publishing monthly compliance reports since it became mandatory in May 2021. Over the second quarter of the financial year 2021-22 (July to September), these platforms together removed, banned or moderated 106.9 Mn content pieces and user accounts.

While the platforms moderated the majority of these posts and accounts themselves through their in-house AI-ML moderators, a small number were actioned based on user reports.

Across the platforms, key categories for user-reported and self-moderated posts were sexual and violent graphic content, spam, hate speech, terrorism and suicidal content, depending on the type of the social media platform.

From July to September 2021, Google moderated over 266K pieces of content.

Meta-owned WhatsApp, on the other hand, banned 6.3 Mn accounts in the same period, Instagram banned and actioned 8.2 Mn accounts and posts, while Facebook’s total for July-September was a little under 92 Mn.

Twitter’s account bans stood at around 50K, while its homegrown alternative Koo moderated 139K posts and actioned 125.4K posts.